This sponsored article is brought to you by the NYU Tandon School of Engineering.

If you’ve ever learned to cook, you know how daunting even simple tasks can be at first. It’s a delicate dance of ingredients, movement, heat, and techniques that newcomers need endless practice to master.

But imagine if you had someone, or something, to assist you. Say, an AI assistant that could walk you through everything you need to know and do in real time, making sure nothing is missed and guiding you to a stress-free, delicious dinner.

Claudio Silva, director of the Visualization Imaging and Data Analytics (VIDA) Center and professor of computer science and engineering and data science at the NYU Tandon School of Engineering and NYU Center for Data Science, is doing just that. He’s leading an initiative to develop an artificial intelligence (AI) “virtual assistant” that provides just-in-time visual and audio feedback to help with task execution.

And while cooking may be part of the project to provide proof of concept in a low-stakes environment, the work lays the foundation for uses ranging from guiding mechanics through complex repair jobs to helping combat medics perform life-saving surgery on the battlefield.

“A checklist on steroids”

The project is part of a national effort involving eight other institutional teams, funded by the Defense Advanced Research Projects Agency (DARPA) Perceptually-enabled Task Guidance (PTG) program. With the support of a $5 million DARPA contract, the NYU group aims to develop AI technologies that help people perform complex tasks, making them more versatile by expanding their skill sets and more proficient by reducing their errors.

Claudio Silva is the co-director of the Visualization Imaging and Data Analytics (VIDA) Center and professor of computer science and engineering at the NYU Tandon School of Engineering and NYU Center for Data Science. Credit: NYU Tandon

The NYU group, including investigators from NYU Tandon’s Department of Computer Science and Engineering, the NYU Center for Data Science (CDS), and the Music and Audio Research Laboratory (MARL), has been performing fundamental research on knowledge transfer, perceptual grounding, perceptual attention, and user modeling to create a dynamic intelligent agent that engages with the user, responding not only to circumstances but also to the user’s emotional state, location, surrounding conditions, and more.

Dubbing it a “checklist on steroids,” Silva says that the project aims to develop the Transparent, Interpretable, and Multimodal Personal Assistant (TIM), a system that can “see” and “hear” what users see and hear, interpret spatiotemporal contexts, and provide feedback through speech, sound, and graphics.
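
How TIM actually tracks a user’s progress is not described here. As a rough illustration of the “checklist” half of the idea only, the minimal Python sketch below assumes that some perception model already reports which step it thinks the user just performed. `ChecklistTracker`, its methods, and the step names are hypothetical and are not part of TIM.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ChecklistTracker:
    """Hypothetical step tracker: follows an ordered checklist as detected
    steps arrive and flags steps that seem to be done out of order."""

    steps: list[str]
    done: set[str] = field(default_factory=set)

    def next_step(self) -> str | None:
        # The first checklist step that has not been observed yet.
        for step in self.steps:
            if step not in self.done:
                return step
        return None  # every step is complete

    def observe(self, detected: str) -> str:
        # `detected` stands in for the output of some perception model
        # (for example, an action recognizer running on headset video).
        if detected not in self.steps:
            return f"ignored unknown step: {detected}"
        if detected in self.done:
            return f"already done: {detected}"
        expected = self.next_step()
        self.done.add(detected)
        if detected == expected:
            return f"ok: {detected}"
        return f"warning: '{detected}' started before '{expected}'"


tracker = ChecklistTracker(["boil water", "add pasta", "drain pasta"])
print(tracker.observe("boil water"))   # ok: boil water
print(tracker.observe("drain pasta"))  # warning: 'drain pasta' started before 'add pasta'
print(tracker.next_step())             # add pasta
```

A real assistant would also have to cope with uncertain detections and decide when and how to speak up; the sketch only shows why an ordered list of steps plus a stream of observations is enough to notice a skipped step.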

While the project’s initial use cases for evaluation focus on military applications such as assisting medics and helicopter pilots, countless other scenarios could benefit from this research: effectively any physical task.

“The vision is that when someone is performing a certain operation, this intelligent agent would not only guide them through the procedural steps for the task at hand, but also be able to automatically track the process and sense both what is happening in the environment and the cognitive state of the user, while being as unobtrusive as possible,” said Silva.

The project brings together a team of researchers from across computing, including visualization, human-computer interaction, augmented reality, graphics, computer vision, natural language processing, and machine listening. It includes 14 NYU faculty and students, with co-PIs Juan Bello, professor of computer science and engineering at NYU Tandon; Kyunghyun Cho and He He, associate and assistant professors (respectively) of computer science and data science at NYU Courant and CDS; and Qi Sun, assistant professor of computer science and engineering at NYU Tandon and a member of the Center for Urban Science + Progress.

The project uses the Microsoft HoloLens 2 augmented reality system as its hardware testbed. Silva said that, because of its array of cameras, microphones, lidar scanners, and inertial measurement unit (IMU) sensors, the HoloLens 2 headset is an ideal experimental platform for Tandon’s proposed TIM system.

In building the technology, Silva’s team turned to a specific task that requires a lot of visual analysis and could benefit from a checklist-based system: cooking.

“Integrating HoloLens will allow us to deliver massive amounts of input data to the intelligent agent we are developing, allowing it to ‘understand’ the static and dynamic environment,” explained Silva. He added that the volume of data generated by the HoloLens sensor array requires integrating a remote AI system, which in turn needs a very high-speed, ultra-low-latency wireless connection between the headset and remote cloud computing.

To hone TIM’s capabilities, Silva’s team will train it on a process that is at once mundane and highly dependent on the correct, step-by-step performance of discrete tasks: cooking. A critical element in this video-based training process is to “teach” the system to locate, by interpreting video frames, the starting and ending point of each action in the demonstration.
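
The team’s actual method for finding action boundaries is not given here. To make “starting and ending point” concrete, the Python sketch below shows one simple post-processing step, assuming a hypothetical frame classifier has already labeled each video frame with an action (or `None`): consecutive frames with the same label are collapsed into time-stamped segments. The function name, the 30 fps default, and the `min_frames` flicker filter are illustrative assumptions.

```python
from __future__ import annotations

from itertools import groupby


def frames_to_segments(
    labels: list[str | None], fps: float = 30.0, min_frames: int = 1
) -> list[tuple[str, float, float]]:
    """Collapse per-frame action labels into (action, start_s, end_s) segments.

    `labels[i]` is the action a (hypothetical) frame classifier assigned to
    frame i, or None when no action was recognized. Runs shorter than
    `min_frames` are dropped as likely classifier flicker.
    """
    segments: list[tuple[str, float, float]] = []
    frame = 0
    for label, run in groupby(labels):
        length = sum(1 for _ in run)  # number of consecutive frames with this label
        if label is not None and length >= min_frames:
            # Start is the first frame of the run; end is the frame just after it.
            segments.append((label, frame / fps, (frame + length) / fps))
        frame += length
    return segments


labels = [None, "crack egg", "crack egg", "crack egg", None, "whisk", "whisk"]
print(frames_to_segments(labels, fps=1.0))
# [('crack egg', 1.0, 4.0), ('whisk', 5.0, 7.0)]
```

Run-length grouping is only the most basic way to turn frame-level predictions into intervals; practical systems typically add temporal smoothing or use models that predict boundaries directly.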

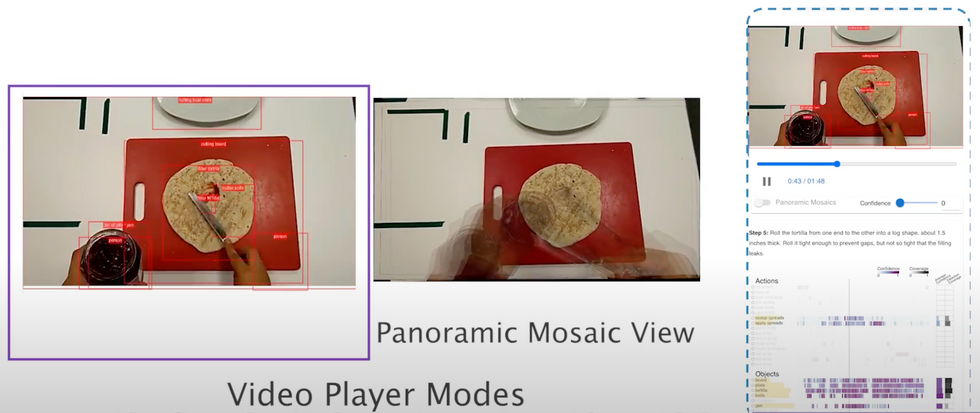

The team is already making substantial progress. Their first major paper, “ARGUS: Visualization of AI-Assisted Task Guidance in AR,” won a Best Paper Honorable Mention Award at IEEE VIS 2023. The paper proposes a visual analytics system they call ARGUS to support the development of intelligent AR assistants.

The system was designed as part of a multiyear collaboration between visualization researchers and ML and AR experts. It supports online visualization of object, action, and step detection as well as offline analysis of previously recorded AR sessions. It visualizes not only the multimodal sensor data streams but also the output of the ML models. This lets developers gain insight into both the performer’s actions and the ML models, helping them troubleshoot, improve, and fine-tune the components of the AR assistant.

“It’s conceivable that in five to 10 years these ideas will be integrated into almost everything we do.”

ARGUS, the interactive visual analytics tool, allows real-time monitoring and debugging while an AR system is in use. It lets developers see what the AR system sees and how it is interpreting the environment and user actions. They can also adjust settings and record data for later analysis. Credit: NYU Tandon

Where all things data science and visualization happen

Silva notes that the DARPA project, focused as it is on human-centered and data-intensive computing, is right at the center of what VIDA does: using advanced data analysis and visualization techniques to illuminate the underlying factors influencing a host of areas of critical societal importance.

“Most of our current projects have an AI component, and we tend to build systems, such as the ARt Image Exploration Space (ARIES) in collaboration with the Frick Collection, the VisTrails data exploration system, or the OpenSpace project for astrographics, which is deployed at planetariums around the world. What we make is really designed for real-world applications, systems for people to use, rather than as theoretical exercises,” said Silva.

VIDA comprises nine full-time faculty members focused on applying the latest advances in computing and data science to solve varied data-related issues, including quality, efficiency, reproducibility, and legal and ethical implications. The faculty, along with their researchers and students, are helping to provide key insights into myriad challenges where big data can inform better decision-making.

What separates VIDA from other groups of data scientists is that its members work with data along the entire pipeline, from collection to processing to analysis to real-world impact. They apply data in different ways (improving public health outcomes, analyzing urban congestion, identifying biases in AI models), but the core of all their work lies in this comprehensive view of data science.

The center has dedicated facilities for building sensors, processing massive data sets, and running controlled experiments with prototypes and AI models, among other needs. Other researchers at the school, often blessed with data sets and models too large and complex to handle themselves, come to the center for help dealing with it all.

The VIDA team is growing, continuing to attract exceptional students and publishing data science papers and presentations at a rapid clip. But its members remain focused on their core goal: using data science to effect real-world change, from the most contained problems to the most socially harmful.